Ignorance occurs when the outcomes are not known (or predicted); uncertainty occurs when the outcomes are known (or predicted) but the probabilities are not; and risk occurs when the probabilities of outcomes are thought to be known.

I realize I have previously written that all forecasts can be 100% accurate and that we should stop apologizing for uncertainty. But you are probably creating risk without even realizing it. Think about a decision you made in the past that you were totally convinced was right. Or think about the time you were sure the forecast was going up or down and the exact opposite occurred. What did you miss? It may have been the human factor, and the error you introduced yourself.

Irrational behavior can cause consumers to act independently and create variation, but it can also affect you, the forecaster, by creating your own bias. Face it, we can be our own worst enemies. If we want to make the forecast better, we should remember these eight mental traps and common biases, and avoid them.

8 Biases That Forecasters Fall Victim To

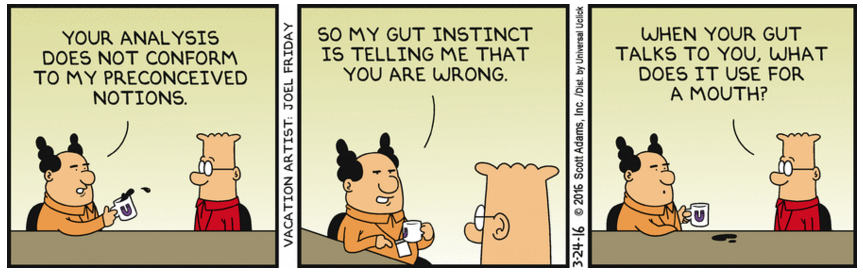

1 – Trust Me Bias: The tendency to interpret information in a way that confirms one’s preconceptions, more commonly known as confirmation bias. It is one of the most common types of bias, and one that we all fall victim to because the data often “feels right.” This happens a lot when people have an idea of what should happen, find a way to get the models or data to agree with that idea, and rationalize it afterwards. The misconception is that your predictions or opinions are the result of rational, objective analysis. In truth, your opinions are more often the result of focusing on information that confirms what you already believe while ignoring information that challenges your preconceived notions.

2 – Overfitting: This involves an overly complex model that describes the noise (randomness) in the data set rather than the underlying statistical relationship. You may be surprised how common overfitting is; many people (or their forecasting systems) do it unknowingly every day. It can occur when you allow your system to choose “best-fit” models for time series data. With dozens of models, countless parameter settings, and enough time, you can fit a model to almost any data set. But there is no guarantee that the model will generate good forecasts, or that it should be used at all.
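To make the point concrete, here is a minimal sketch using made-up numbers (the `train` and `holdout` series are hypothetical, not real demand data). It compares a simple average against a deliberately over-complex model, a polynomial forced through every historical point, and measures each on the history it was fitted to and on periods it never saw.

```python
# Hypothetical demand history: pure noise around a level of about 100.
train = [102, 97, 105, 99, 94, 103, 98, 101]   # periods 0..7, used for fitting
holdout = [100, 96, 104, 99]                    # periods 8..11, never seen when fitting


def mean_model(history):
    """Simple model: forecast every period with the historical average."""
    level = sum(history) / len(history)
    return lambda period: level


def interpolating_polynomial(history):
    """Overly complex model: the Lagrange polynomial through every point."""
    xs = list(range(len(history)))

    def predict(period):
        total = 0.0
        for i, y_i in enumerate(history):
            term = float(y_i)
            for j in xs:
                if j != i:
                    term *= (period - j) / (i - j)
            total += term
        return total

    return predict


def mean_abs_error(model, periods, actuals):
    """Mean absolute error of a model's forecasts over the given periods."""
    return sum(abs(model(p) - a) for p, a in zip(periods, actuals)) / len(actuals)


train_periods = range(len(train))
holdout_periods = range(len(train), len(train) + len(holdout))

simple = mean_model(train)
complex_fit = interpolating_polynomial(train)

fit_error_simple = mean_abs_error(simple, train_periods, train)
fit_error_complex = mean_abs_error(complex_fit, train_periods, train)
holdout_error_simple = mean_abs_error(simple, holdout_periods, holdout)
holdout_error_complex = mean_abs_error(complex_fit, holdout_periods, holdout)

print(f"in-sample MAE  simple={fit_error_simple:.2f}  complex={fit_error_complex:.2f}")
print(f"holdout MAE    simple={holdout_error_simple:.2f}  complex={holdout_error_complex:.2f}")
```

The complex model wins easily on the history it was fitted to, because it reproduces every point exactly, and then loses badly on the holdout periods. Choosing models by fit alone rewards exactly this behavior, which is why a holdout check is worth adding to any "best-fit" selection.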

3 – Anchor Bias: Anchoring is best known from negotiations, where the value of an offer is highly influenced by the first relevant number (the anchor) that starts the negotiation. It can also generate bias when your boss or a sales executive says from the outset, “I think we may be up 10% next period.” Anchors, whether deliberate or accidental, are extremely influential. They create subtle psychological cues that steer your results, which then just happen to come out close to 10% up for the next period.

4 – Innovation Bias: This is the assumption that anything new must be an improvement. Cognitive studies have shown that human beings tend to over-emphasize both similarities and differences between the new and old things they are appraising. For our purposes, this can happen when evaluating new data or models against older ones. Of course, the new ones must be better, right? We tend to overvalue a new model and the usefulness of new data, and to undervalue their shortcomings.

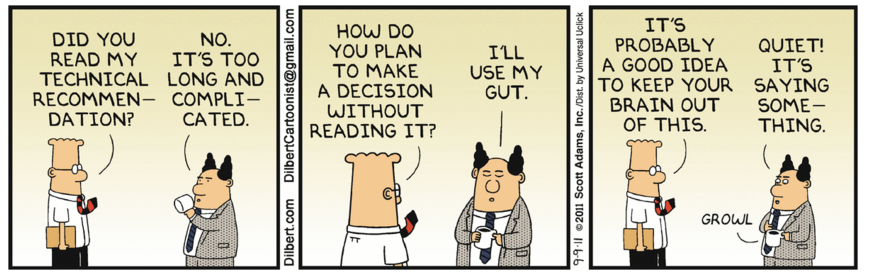

5 – Black Box Bias: This is somewhat the opposite of innovation bias, and it’s based on a simple premise: “If I don’t understand it, it must be wrong.” This is the idea that if you don’t understand where the number came from, or you didn’t add your own qualitative judgement to it, then it can’t be right. Don’t be distracted by your ego. Accept that this time you just may not be adding value. In his book, Michael Gilliland highlights a study by Steve Morlidge. After studying over 300,000 forecasts, Morlidge found that a staggering 52% of them were worse than a naïve forecast, or random walk.
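One way to check whether your adjustments are adding value is to score your forecast against the naïve forecast over the same periods. The sketch below is an illustration with made-up numbers (`actuals` and `forecasts` are hypothetical), using mean absolute error as the yardstick. It is not necessarily the metric used in the study mentioned above.

```python
# Hypothetical data: actual demand for six periods and the forecasts that were
# issued for periods 1..5 (period 0 has no naive benchmark to compare against).
actuals = [100, 104, 98, 102, 101, 105]
forecasts = [110, 95, 108, 96, 110]


def mean_abs_error(predicted, observed):
    """Mean absolute error between two equally long sequences."""
    return sum(abs(p - o) for p, o in zip(predicted, observed)) / len(observed)


# Naive (random walk) forecast: next period equals the last observed actual.
naive = actuals[:-1]
observed = actuals[1:]

forecast_mae = mean_abs_error(forecasts, observed)
naive_mae = mean_abs_error(naive, observed)

# A ratio above 1 means the forecast did worse than simply repeating last period.
relative_error = forecast_mae / naive_mae
print(f"forecast MAE={forecast_mae:.2f}  naive MAE={naive_mae:.2f}  ratio={relative_error:.2f}")
```

With these illustrative numbers the ratio comes out above 1, meaning the effort that went into the forecast made it worse than repeating last period's actual.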

6 – Complexity Bias: This is the belief that the more elaborate and numerous the inputs, the better the results. With this bias, you believe that unless you are forecasting at the customer/item/location level by hour, adding assumptions from every salesperson, factoring in the weather for the next five days, and so on, you don’t have a forecast. The forecasting process can be degraded in various places by the biases and personal agendas of participants. The more elaborate the process and the more human touch points it has, the more opportunity these biases have to taint what should be a simple and objective process.

7 – Modelling Bias: This is the tendency to skew data and predictive models by starting with a biased set of assumptions about the problem. This leads to selecting the wrong variables, the wrong data, the wrong algorithms, and the wrong metrics. It can also mean over-trusting a process because it worked well once. The danger here should not be underestimated; analytical processes must be constantly fine-tuned to remain effective. To lock in an analytical process because it delivered a positive result, forgoing continuous fine-tuning and critical scrutiny of the results, is asking for trouble.

8 – (n=all) Bias: The last one on our list is somewhat obscure, but it happens to the best of us. We can become focused on information, trends, or details that really have no effect on the outcome we are pursuing. This bias is hard to root out because it is often a good thing to use everything we know, or even to toss unknowns into the input of a data mining operation. One problem is that, with enough data to examine, eventually you’ll find a statistically significant relationship where no real relationship exists. Another problem is that even if you find the answer, it becomes difficult to understand which inputs produced it.
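The "enough data" problem is easy to reproduce. The sketch below is a simulation under stated assumptions, with nothing in it coming from real data: it generates a random target series and 1,000 equally random candidate "drivers", then counts how many candidates clear a conventional 5% significance cutoff for correlation. The cutoff of 0.444 is the approximate two-sided critical value of |r| for 20 observations.

```python
import math
import random

rng = random.Random(42)          # fixed seed so the run is repeatable
n_periods = 20                   # length of each series
n_candidates = 1000              # unrelated "drivers" tossed into the search
critical_r = 0.444               # approx. two-sided 5% cutoff for |r| when n = 20


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equally long sequences."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Target and candidates are all independent random noise: no real relationship.
target = [rng.gauss(0, 1) for _ in range(n_periods)]
candidates = [[rng.gauss(0, 1) for _ in range(n_periods)] for _ in range(n_candidates)]

correlations = [pearson_r(series, target) for series in candidates]
false_hits = sum(1 for r in correlations if abs(r) > critical_r)

print(f"{false_hits} of {n_candidates} unrelated series look 'significant'")
```

Roughly one candidate in twenty should pass the test by chance alone, so a search across a thousand inputs can be expected to surface dozens of "drivers" that mean nothing.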

Finally, if those are not bad enough, we cannot forget about the worst one of all: the “I already knew all this” bias. This one gives you a false sense of security that these biases don’t affect you, and it creates a blind spot: a failure to recognize and allow for your own biases. To solve this one, you must admit that you don’t know what you don’t know, and that you can fool even the smartest person you know: yourself.