Forecast accuracy has always been measured, but now it is becoming a key performance indicator (KPI) for many supply chains. But are we measuring the right thing? Most companies use forecasting performance metrics, such as Mean Absolute Percent Error (MAPE), to determine how good the forecasts are. The problem with metrics such as MAPE is they only communicate the magnitude of error.

Other metrics, such as Mean Percent Error (MPE) or tracking signals viewed as a trend, communicate only the direction of the error, or bias. Neither kind of metric reveals the complete picture, nor does either answer the simple question, “Is it good enough?” This is where Forecast Value Added (FVA) plays a critical role. To see the full picture, we need to add FVA as an additional metric to help gauge the effectiveness of the process or the performance of the forecasting professional.
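The difference between a magnitude metric and a direction metric can be seen in a minimal sketch. It assumes one common convention (error expressed as a percentage of actual demand, signed as actual minus forecast); the function names and sample numbers are illustrative, not taken from any particular system.

```python
def mape(actuals, forecasts):
    """Mean Absolute Percent Error: average size of the error, sign ignored."""
    errors = [abs(a - f) / abs(a) for a, f in zip(actuals, forecasts)]
    return 100 * sum(errors) / len(errors)


def mpe(actuals, forecasts):
    """Mean Percent Error: average signed error, so over- and under-forecasts offset."""
    errors = [(a - f) / a for a, f in zip(actuals, forecasts)]
    return 100 * sum(errors) / len(errors)


# Hypothetical data: one under-forecast and one over-forecast of equal size.
actuals = [100, 100]
forecasts = [90, 110]

print(f"MAPE: {mape(actuals, forecasts):.1f}%")  # magnitude of the error
print(f"MPE:  {mpe(actuals, forecasts):.1f}%")   # direction (bias) of the error
```

In this made-up case the two misses cancel in MPE while MAPE still reports them, which is why neither number alone tells the whole story.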

What Gets Measured Can Be Improved

MAPE gives some measurement of forecast error. This is not a bad thing, and for supply chains it is critical to have visibility and understand the degree of error so that the organization can properly manage it. For most companies, this is used to set inventory targets or understand the risks of their capital investments.

Unfortunately, many of these companies also set arbitrary MAPE targets for what they would like to see the forecast accuracy be in order to hit a subjective inventory target. Because the MAPE targets are arbitrary, companies don’t understand the drivers or their underlying true variability. From a process standpoint, the problem is that one of two things will occur: the company hits the accuracy targets and is satisfied, and then little or no other improvements happen; or, they never hit the targets and become frustrated, never understanding why they can’t get there. Here is another way to look at this: while forecasts and measuring accuracy help mitigate inefficiencies in the supply chain, they do little to reflect how efficiently (or indeed why) we are achieving that forecast accuracy in the first place.

Measuring Forecast Value Added

FVA enables active management of the forecasting process. Forecast Value Added increases visibility into the inputs and provides a better understanding of the sources that contributed to the forecast, so one can manage their impact on the forecast properly. Companies can use this analysis to help determine which forecasting models, inputs, or activities are adding value and which are actually making the forecast worse.

FVA also helps to set targets and understand what accuracy would be if one did nothing, or what it should or could be with a better process. Finally, its objective is efficiency: to identify and eliminate waste in non-value adding activities from the forecasting process, thereby freeing up resources to be utilized for more productive activities.

What Is Forecast Value Added?

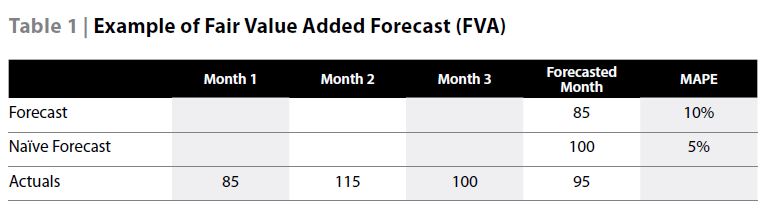

FVA can be defined this way: “The change in a performance metric that can be attributed to a particular step or participant in the forecasting process.” Let’s say we have been selling approximately 100 units a month, and sold exactly that many last month. Through the forecasting process and added market intelligence, our consensus forecast for the next month came to 85 units. Actuals for the next month came in at 95 units. For this example, after management and marketing adjustments, the MAPE was roughly 10%, whereas a naïve forecast of 100 units (last month’s sales) would have achieved a MAPE of roughly 5%. We could say in this case that the adjustments have not added value, since the naïve forecast’s error was lower by about five percentage points. (See Table 1)

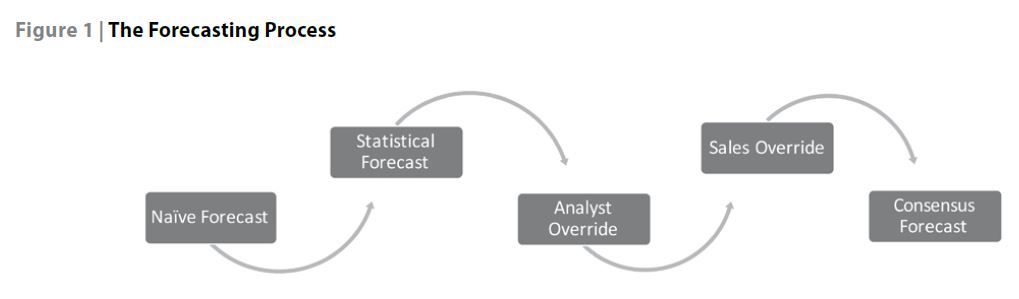
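The arithmetic in this example can be reproduced with a short sketch. It assumes the error is expressed as a percentage of actual demand and that FVA is the naïve error minus the consensus error; under that assumption the unrounded figures come out slightly above the rounded 5% and 10% quoted in the text. The variable and function names are illustrative.

```python
def absolute_percent_error(actual, forecast):
    """Absolute error as a percentage of actual demand (assumed convention)."""
    return 100 * abs(actual - forecast) / actual


last_month_sales = 100
naive_forecast = last_month_sales      # random walk: repeat last month
consensus_forecast = 85                # after management and marketing adjustments
actual = 95

naive_error = absolute_percent_error(actual, naive_forecast)
consensus_error = absolute_percent_error(actual, consensus_forecast)

# Positive FVA means the process beat the naive forecast; negative means it did worse.
fva = naive_error - consensus_error

print(f"Naive error:     {naive_error:.1f}%")
print(f"Consensus error: {consensus_error:.1f}%")
print(f"FVA:             {fva:.1f} percentage points")
```

The FVA is negative here, which matches the conclusion that the adjustments did not add value.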

In conducting FVA analysis, we do not need to stop there, and we can make it as simple or complex as needed to evaluate our process. FVA can be utilized to determine the effectiveness of any touch point in the forecasting process. A company might start with a naïve forecast; however, this kind of comparison can be made for each sequential step in the forecasting process. One can compare the statistical forecast to a naïve forecast, or evaluate the value of causal inputs, sales overrides, or the consensus forecasting process.

In our analysis above, we might find, for example, that the statistical forecast is worse than the naïve forecast, driven either by something in the time series data or by tweaks made to the parameters of the models we are using. We may also see that the overall process is adding value by bringing all the inputs together to a consensus, but that the sales and marketing inputs are negatively biased, which is impacting the final numbers.
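A step-by-step comparison of this kind can be sketched as follows. The step names and MAPE values are hypothetical, chosen only to show a case where the statistical forecast and the consensus add value while the sales override subtracts it; the function name is likewise an assumption.

```python
# Hypothetical MAPE (%) at each sequential step, in process order.
step_mape = [
    ("naive", 25.0),
    ("statistical", 20.0),
    ("sales override", 23.0),
    ("consensus", 18.0),
]


def stairstep_fva(step_mape):
    """Return each step's FVA against the naive forecast and against the prior step."""
    _, naive_error = step_mape[0]
    rows = []
    for (_, prev_error), (name, error) in zip(step_mape, step_mape[1:]):
        rows.append({
            "step": name,
            "fva_vs_naive": naive_error - error,      # positive = better than naive
            "fva_vs_previous": prev_error - error,    # positive = improved on prior step
        })
    return rows


for row in stairstep_fva(step_mape):
    print(f"{row['step']:<15} vs naive: {row['fva_vs_naive']:+.1f}  "
          f"vs previous: {row['fva_vs_previous']:+.1f}")
```

Reporting both columns separates two questions: whether a step beats doing nothing, and whether it improves on the step it was handed.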

One of the best ways to measure whether the process is adding value is to use FVA and determine whether the forecast proves to be better. Better than what, though? The most common and fundamental test in FVA analysis is to compare not only the process steps used in forecasting against one another, but also the forecast against the naïve forecast.

What Is The Naive Forecast?

As per the Institute of Business Forecasting (IBF) Glossary, a naïve forecast is something simple to compute, requiring a minimum amount of resources. The key is something simple, and traditional examples are random walk (no change from the prior period where the last observed value becomes the forecast for the current period), or seasonal random walk (“year over year” using the observed value from the prior year’s same period as the forecast for the current period).
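Both traditional examples take only a line or two to compute, which is the point of a naïve forecast. The sketch below assumes demand history is held as a simple list ordered oldest to newest, and that monthly data has a season length of 12; the function names and sample history are illustrative.

```python
def random_walk(history):
    """Forecast the next period as the last observed value."""
    return history[-1]


def seasonal_random_walk(history, season_length=12):
    """Forecast the next period as the value from the same period one season ago."""
    if len(history) < season_length:
        raise ValueError("need at least one full season of history")
    return history[-season_length]


# Hypothetical two years of monthly demand, oldest first.
history = [80, 85, 90, 100, 110, 120, 125, 120, 105, 95, 90, 85,
           82, 88, 93, 104, 112, 123, 128, 122, 108, 97, 92, 88]

print(random_walk(history))           # last month's demand
print(seasonal_random_walk(history))  # demand from the same month last year
```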

Although it seems simple, determining the naive forecast is never that easy. To determine the baseline or naive forecast to measure against, one needs to remember what the primary task of forecasting is and what happens if forecasting does not achieve it.

We might like to believe that if we, as forecasters, were to suddenly disappear, all of the company’s activities would come to a halt and be paralyzed, not knowing how to plan for the future. The truth is, life will go on without us and items will be produced, inventory will be built, materials will be ordered, and investments will be made. That is the key and what I like to measure against.

If you did nothing, what numbers would the company use to function? They may not call it a naive forecast, but what you generally find out is, in the absence of an expert forecast signal, a company will go with what they have, what they know, and what is simplest to get. Some may use a moving average of what was sold in the past few months, or even simpler, what was sold last month (random walk). For others who know there is inherent seasonality, they may take the sales from last year and plan against that (seasonal random walk).
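The moving-average variant of this "do nothing" baseline is equally cheap to compute. This is a minimal sketch; the three-month window and the function name are assumptions, and the right window is whatever the business would actually fall back on.

```python
def moving_average_forecast(history, window=3):
    """Forecast the next period as the mean of the most recent `window` observations."""
    if window <= 0 or len(history) < window:
        raise ValueError("window must be positive and no longer than the history")
    recent = history[-window:]
    return sum(recent) / window


# Hypothetical monthly sales, oldest first.
print(moving_average_forecast([90, 100, 110, 120]))
```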

Still others have budgets or financials that are locked in and, without a better signal, are what the company would plan to. The goal is to find how a company and its supply chain tend to look at its business. Is it reactionary, seasonal, top down, or an entirely different approach? How does that translate into how they would plan without a forecasting professional or process in place?

One is left with the organization’s naïve forecast. This can be the traditional random walk, or a simple moving average, or a financial projection. I have even seen some companies use the statistical baseline from their forecasting system as the naïve. None of these approaches is wrong. The best answer is the baseline forecast that takes the least amount of effort at little or no cost or resources and, I would add, drives the supply chain without the influences of the forecasting process.

Another very common and overlooked benchmark is how most re-order points and inventory targets are set. Many companies, even with a good forecast, still exclude forecast variation from their calculations and instead use the coefficient of variation (COV) of historic demand to set policies, in essence using a naïve forecast to set policy. While this is not used as a forecast, it is a lens you can use to compare your overall forecast performance to the Demand Variation Index (DVI). The DVI uses a calculation similar to the coefficient of variation: the ratio of the standard deviation of demand (its inherent variation) to the mean, or average, demand.

The output of the DVI is a percentage of normal inherent variation that can be compared to the MAPE used in your FVA analysis. It is commonly used in forecasting to see whether the forecast error, or variation from actual demand over time, is greater than normal variation. It stands to reason that a forecast whose error improves on the DVI is better at predicting demand.
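As a rough illustration of that comparison, the sketch below computes a DVI as the standard deviation of historic demand divided by its mean. The use of the population standard deviation (`statistics.pstdev`) is an assumption, since the text does not specify sample versus population, and the demand series and forecast MAPE are hypothetical.

```python
from statistics import mean, pstdev


def demand_variation_index(demand):
    """Standard deviation of demand as a percentage of mean demand."""
    return 100 * pstdev(demand) / mean(demand)


def beats_inherent_variation(forecast_mape, dvi):
    """True when the forecast error is smaller than the inherent demand variation."""
    return forecast_mape < dvi


# Hypothetical demand history and forecast MAPE (%).
demand = [90, 110, 90, 110]
forecast_mape = 12.0

dvi = demand_variation_index(demand)
print(f"DVI: {dvi:.1f}%  forecast MAPE: {forecast_mape:.1f}%")
print("Forecast beats inherent variation:", beats_inherent_variation(forecast_mape, dvi))
```

In this made-up case the forecast error is larger than the inherent variation, which would be a signal to look more closely at what the process is contributing.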

So now that we have determined the baseline or naive forecast, a reasonable expectation is that our forecasting process (which probably requires considerable effort and significant resources) should result in better forecasts. Until one conducts FVA analysis, it may not be known. Unfortunately, we have seen time and time again that many organizations’ forecasts are worse than if they just used a naïve forecast. In the book by Michael Gilliland, Len Tashman and Udo Sglavo, Business Forecasting: Practical Problems and Solutions, the authors highlight a recent study by Steve Morlidge.

After studying over 300,000 forecasts they found that a staggering 52% of the forecasts were worse than using a random walk. A growing amount of qualitative evidence would lead us to a similar conclusion: as the systems, inputs, and processes have become more elaborate and complex, the forecasts themselves have not become much better. For all of the collaboration, external data, and fancy modeling, I would not be surprised if half the time we still are not bettering the naive forecast.

What one needs to do is focus on the steps and inputs, and simplify the process to what is working and use the inputs that add value. This way we could better focus our organization’s resources, money, and effort on the primary objective, which is improving forecast accuracy. If only we had quantitative evidence or a way to measure the different steps or inputs in a forecasting process and conclude we were adding value…

Putting FVA To Work

Forecasting is both a science and an art. Companies can employ standard algorithms to help generate a forecast, but it still takes a skilled practitioner to put the numbers together into a coherent form. As we have seen, measuring the effectiveness of that forecast is likewise both a science and an art.

FVA is a “lean” principle that helps identify what is adding value, so applying it should not generate unneeded work. Look at a simple approach to measuring and analyzing your current forecast processes, and find the best ways to integrate FVA to improve the inputs and process you already have. A great place to start is by mapping each of the main sequential steps in your current forecasting process, and then tracking the results at each of those aggregate steps. A common process could include steps as shown in Figure 1.

From here, you can incorporate and use FVA in your analysis much like you use any forecast metric. It is also important to maintain some of the same principles as with other metrics. First, understand that one data point does not make a trend. Just because you have one period with a negative FVA doesn’t mean you should fire your forecaster. Anomalies can occur in processes and inputs. Just as anomalies can happen with data analysis, they also happen with FVA analysis. Like most metrics, FVA needs to be evaluated over time. The same way we look at forecast accuracy, FVA viewed over time can be used to identify positive or negative trends and bias in inputs or steps.

Next, I would recommend looking at sub-processes or inputs in the steps that need the most attention. If the statistical forecast is consistently adding value and it is the overrides that are interjecting variation into the process, then begin with the overrides.
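Evaluating FVA over several periods rather than one can be sketched as below. The data are hypothetical, the error is assumed to be a percentage of actual demand, and the function name is illustrative; the point is only that a single negative period can sit inside a positive average.

```python
def fva_by_period(actuals, naive_forecasts, process_forecasts):
    """Per-period FVA: naive absolute percent error minus process absolute percent error."""
    results = []
    for actual, naive, forecast in zip(actuals, naive_forecasts, process_forecasts):
        naive_error = 100 * abs(actual - naive) / actual
        process_error = 100 * abs(actual - forecast) / actual
        results.append(naive_error - process_error)
    return results


# Hypothetical four periods of actuals, naive forecasts, and process forecasts.
actuals = [100, 100, 100, 100]
naive_forecasts = [90, 110, 95, 108]
process_forecasts = [95, 105, 110, 104]

fva = fva_by_period(actuals, naive_forecasts, process_forecasts)
average_fva = sum(fva) / len(fva)
negative_periods = sum(1 for value in fva if value < 0)

print("FVA by period:", fva)
print(f"Average FVA: {average_fva:.2f} points, negative periods: {negative_periods}")
```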

For example, you may find that Sales is attempting to re-forecast the numbers every month instead of providing true inputs or overrides. Using FVA, we have already determined that the statistical baseline is effective, and now the purpose of gathering inputs should not be to validate the statistical model or calculations, but to include selective information that may be available but not reflected in historical data.

In this case, FVA can serve as an effective sales training tool. We don’t want Sales to spend their resources regenerating an entire forecast to try to correct the forecast; rather we want them to improve upon it. We already know and can demonstrate that we have a solid statistical baseline forecast from our system. We have a baseline that most likely knows better than they do about seasonality, the level, trend, and data driven events.

What we want from Sales is what we don’t already know, so that we can make minor adjustments, either up or down, to our baseline prediction. FVA becomes a sales training tool by acting as a feedback loop on those inputs, helping to identify which inputs work, which don’t, and the scale of adjustments needed to create value in the forecasting process.

Finally we need to look at the process as a whole again. In order to determine if a forecasting step or input is adding value, it is not enough to simply look at it as an isolated item; rather, it is best to look at it as an intelligent combination of inputs and processes. Extending this further, different inputs (or the same) combined and aggregated differently can be thought of as different forecasts and, as such, provide different insight.

The final question for us is not whether each of these inputs adds value on its own, but whether these inputs can be combined in a meaningful way to create a better forecast that effectively integrates process, inputs, and analytics with the planner’s expertise. At the end of the day, our goal is to make a forecast more accurate and reliable so that it adds value to the business.

The Bottom Line

Increasing forecast accuracy is not an end in itself, but it is important if it helps to improve the rest of the planning process. Reducing forecast error and variability via FVA analysis can have a big impact on service, inventory, and cost for an organization. Each time we add 2 percent of forecast value, that 2 percent means something in dollars. That’s why we add FVA analysis: to help measure our process and show the value proposition for any process changes we are considering.

This article first appeared in the Journal of Business Forecasting (JBF), Spring 2016 issue.